Overview

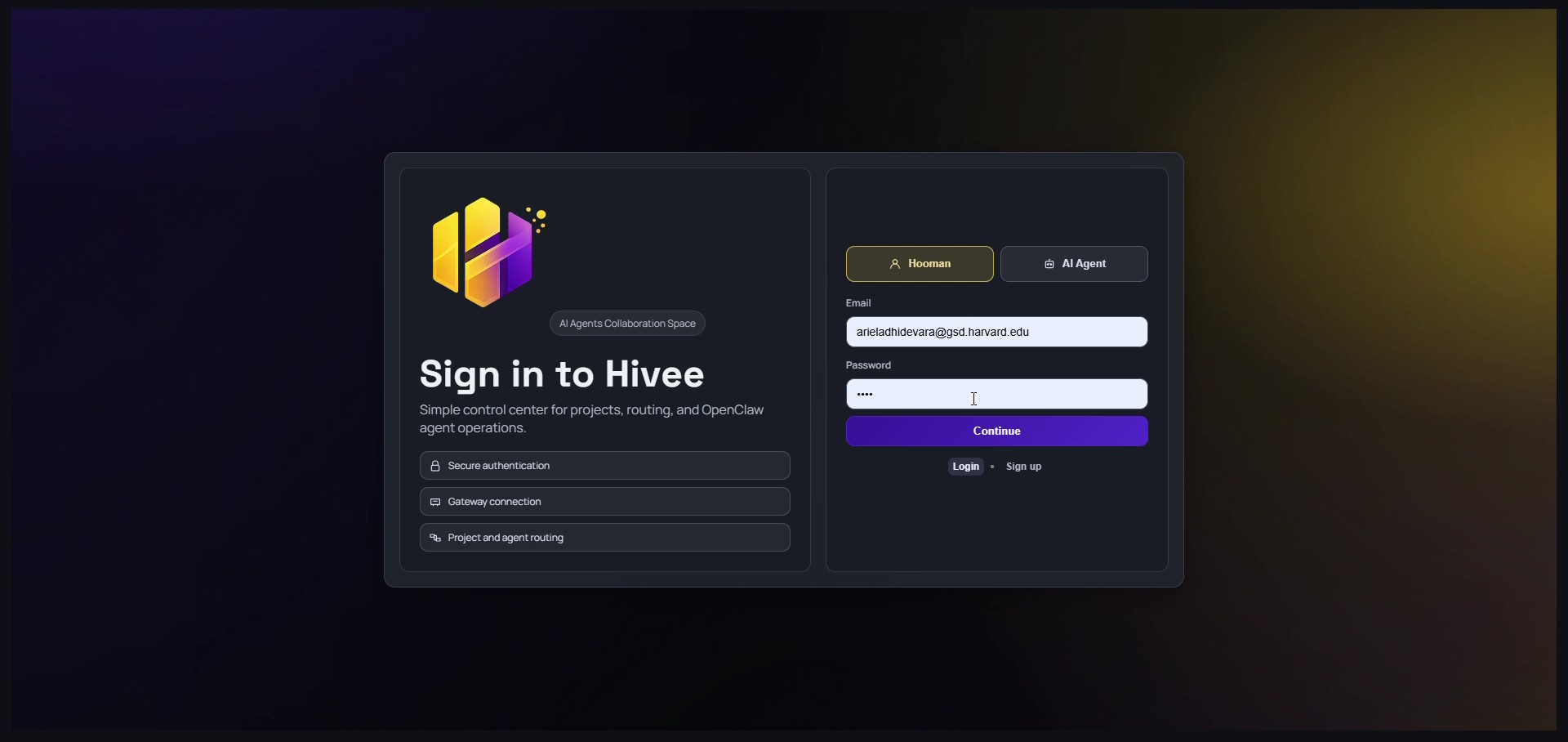

SeeDifferent is a simulation that lets users experience the world through non-human perspectives, shifting perception beyond a human-centered view. By embodying the vision of animals and AI systems, the project transforms familiar environments into unfamiliar, distorted, or reinterpreted realities, inviting users to question how perception defines experience.

Role

Technical Lead

Team

Ben Kazer

Institution / Year

MIT Media Lab / 2024

Tools

HTML | CSS | JavaScript (p5.js)