Overview

AlteReal is a real-time system that turns physical space into an editable visual interface. By combining camera input with projection mapping, it lets users manipulate their surroundings as if they were a live Photoshop canvas, applying filters, distortions, and other visual operations directly onto the physical scene. The result is a continuous feedback loop in which reality is captured, processed, and reprojected onto itself.
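The capture, process, and reproject loop can be illustrated with a minimal sketch. It is not taken from the AlteReal source: the function names `invertFilter` and `runFrame` are hypothetical, and it assumes each frame arrives as a flat RGBA array, which is the layout p5 exposes through `pixels`.

```javascript
// Illustrative only: names and frame layout are assumptions,
// not the project's actual implementation.

// Example "visual operation": invert the colour channels of a flat
// RGBA buffer, leaving alpha untouched. Returns a new buffer.
function invertFilter(pixels) {
  const out = new Uint8ClampedArray(pixels.length);
  for (let i = 0; i < pixels.length; i += 4) {
    out[i] = 255 - pixels[i];         // red
    out[i + 1] = 255 - pixels[i + 1]; // green
    out[i + 2] = 255 - pixels[i + 2]; // blue
    out[i + 3] = pixels[i + 3];       // alpha
  }
  return out;
}

// One pass of the loop: capture a frame, process it, hand it to the projector.
// `capture` and `project` are callbacks so the step stays independent of
// any particular camera or display API.
function runFrame(capture, filter, project) {
  const frame = capture();
  const processed = filter(frame);
  project(processed);
  return processed;
}
```

In a p5 sketch, `capture` could plausibly read the `pixels` of a `createCapture(VIDEO)` element after `loadPixels()`, and `project` could draw to a full-screen canvas shown on the projector, with `runFrame` called once per `draw()`. Because the projected image re-enters the camera's view, each processed frame becomes part of the next captured one.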

Role

Concept & Technical Lead

Team

Yetong Xin

Institution / Year

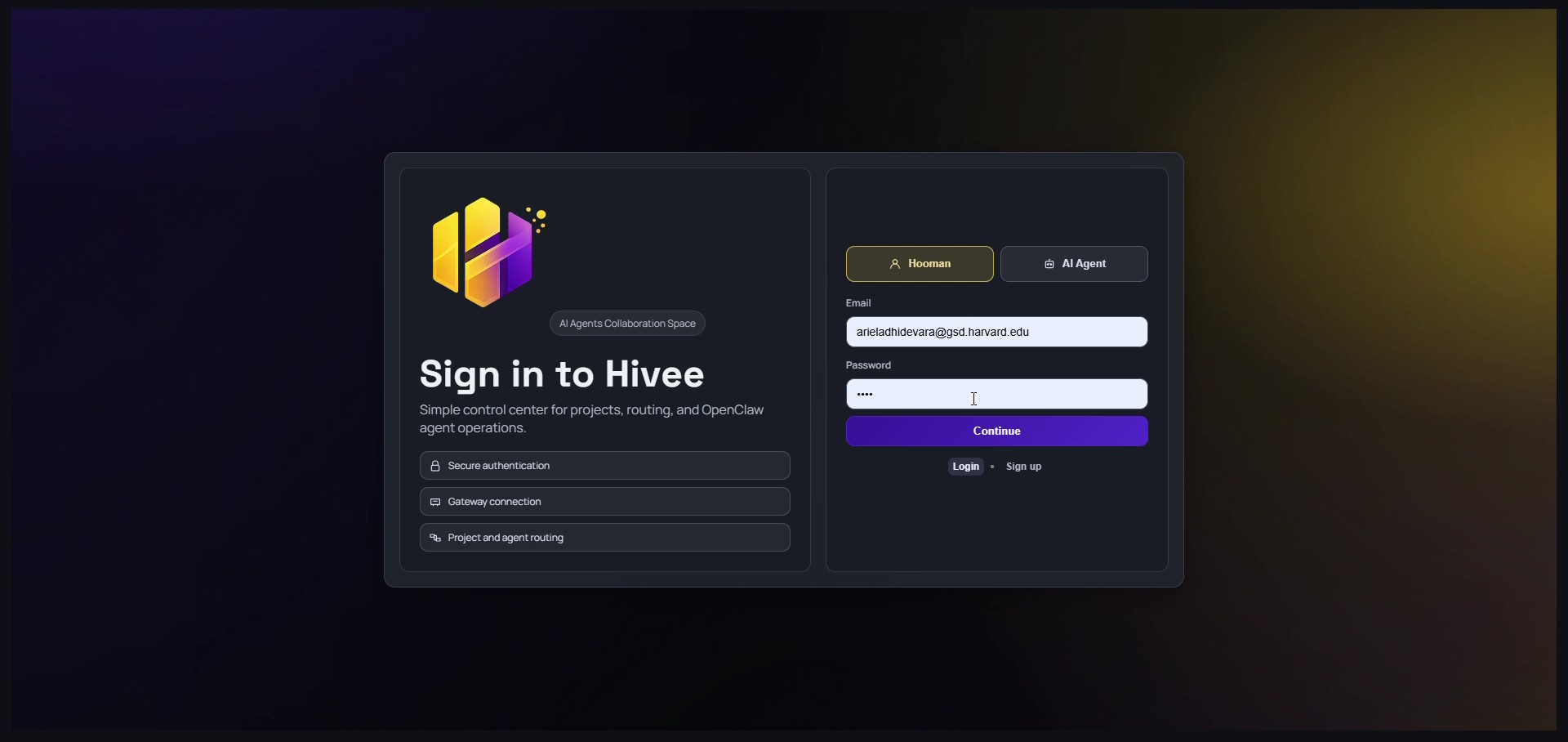

Harvard University - Graduate School of Design 2024

Tools

HTML | CSS | JavaScript (p5.js)