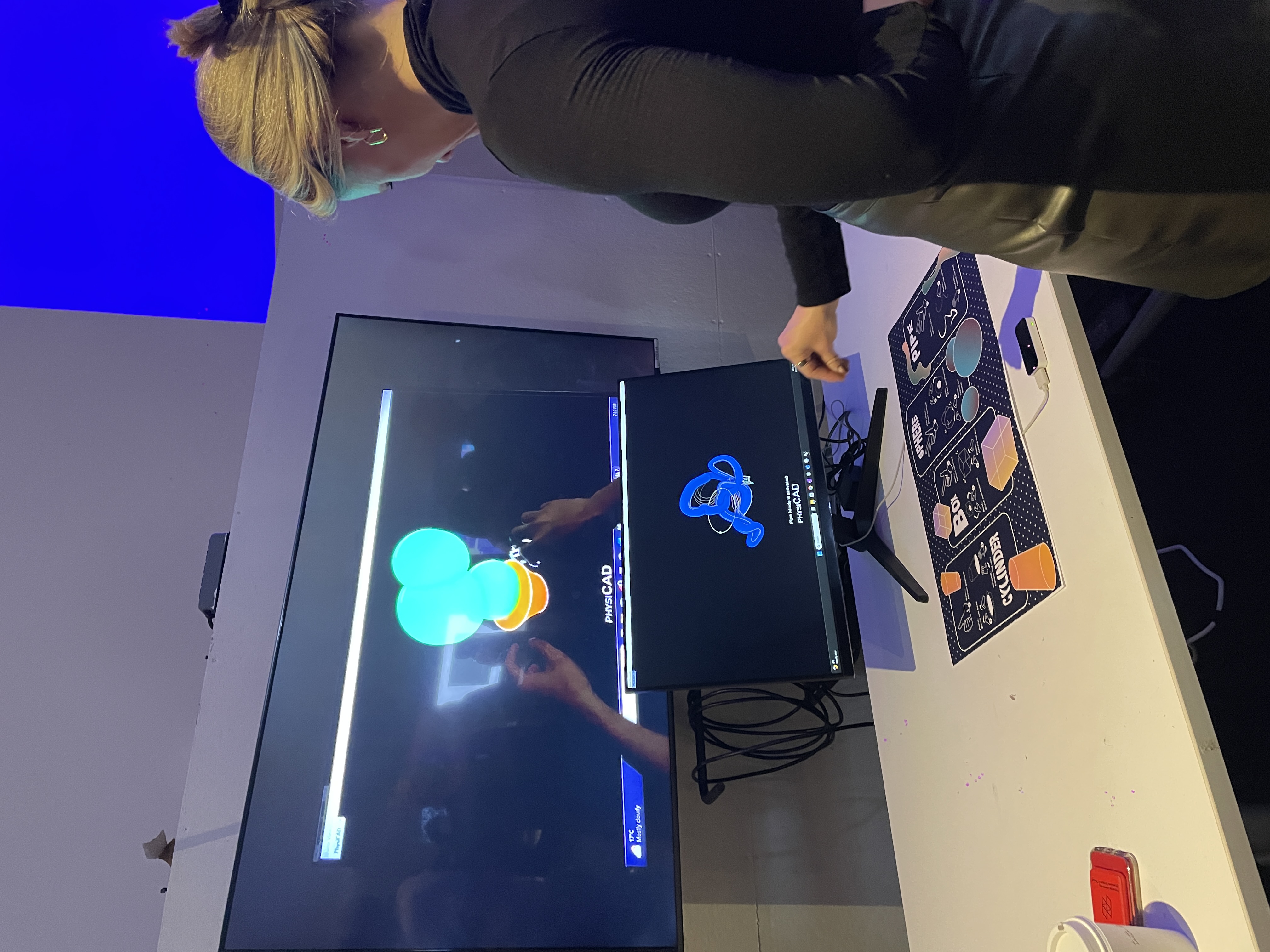

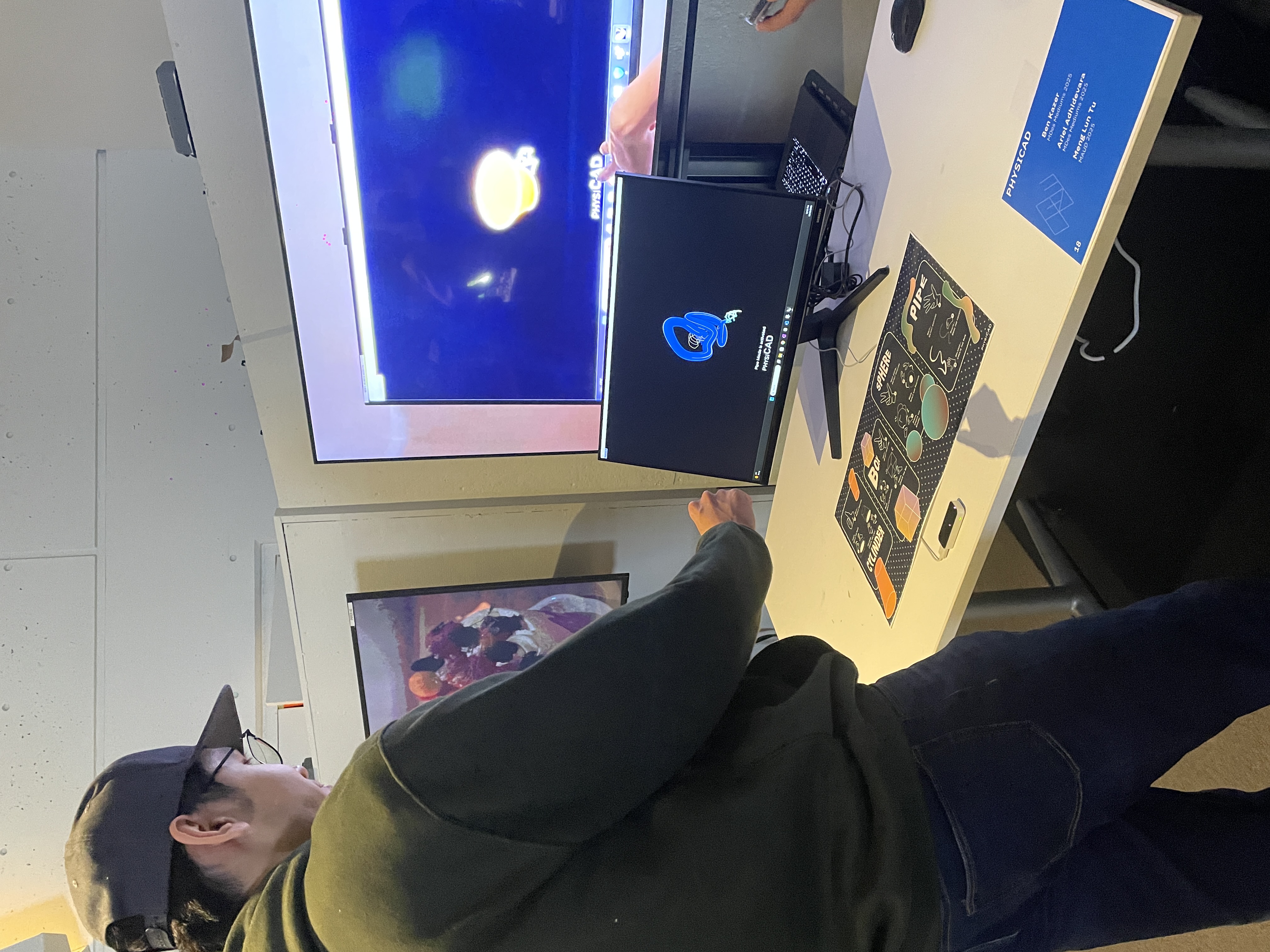

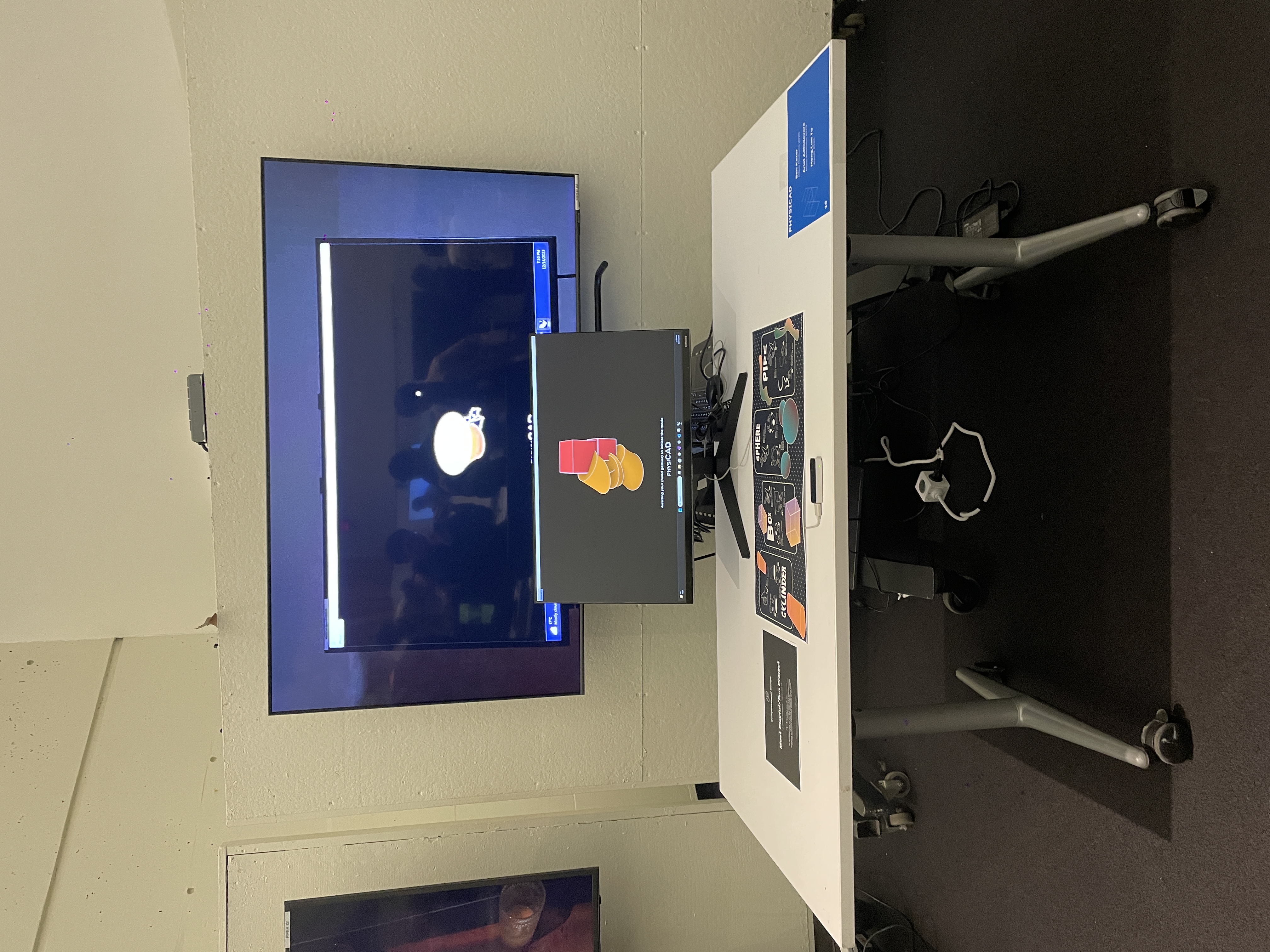

Overview

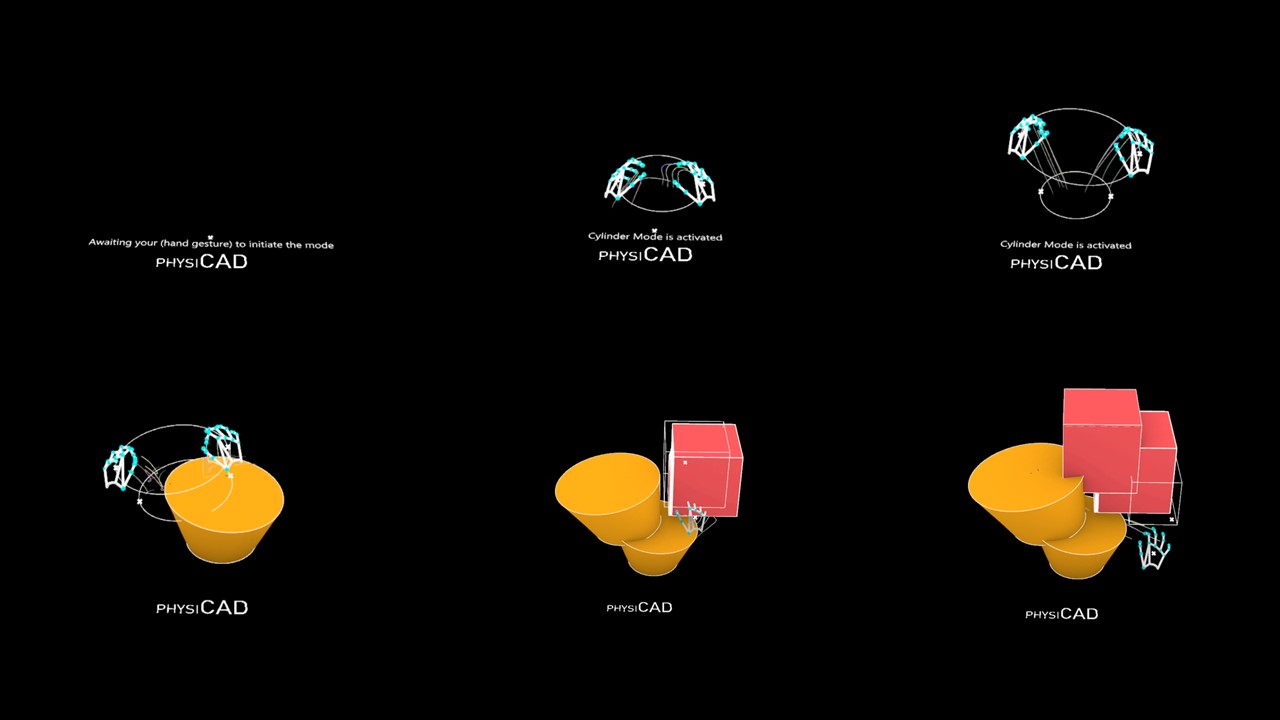

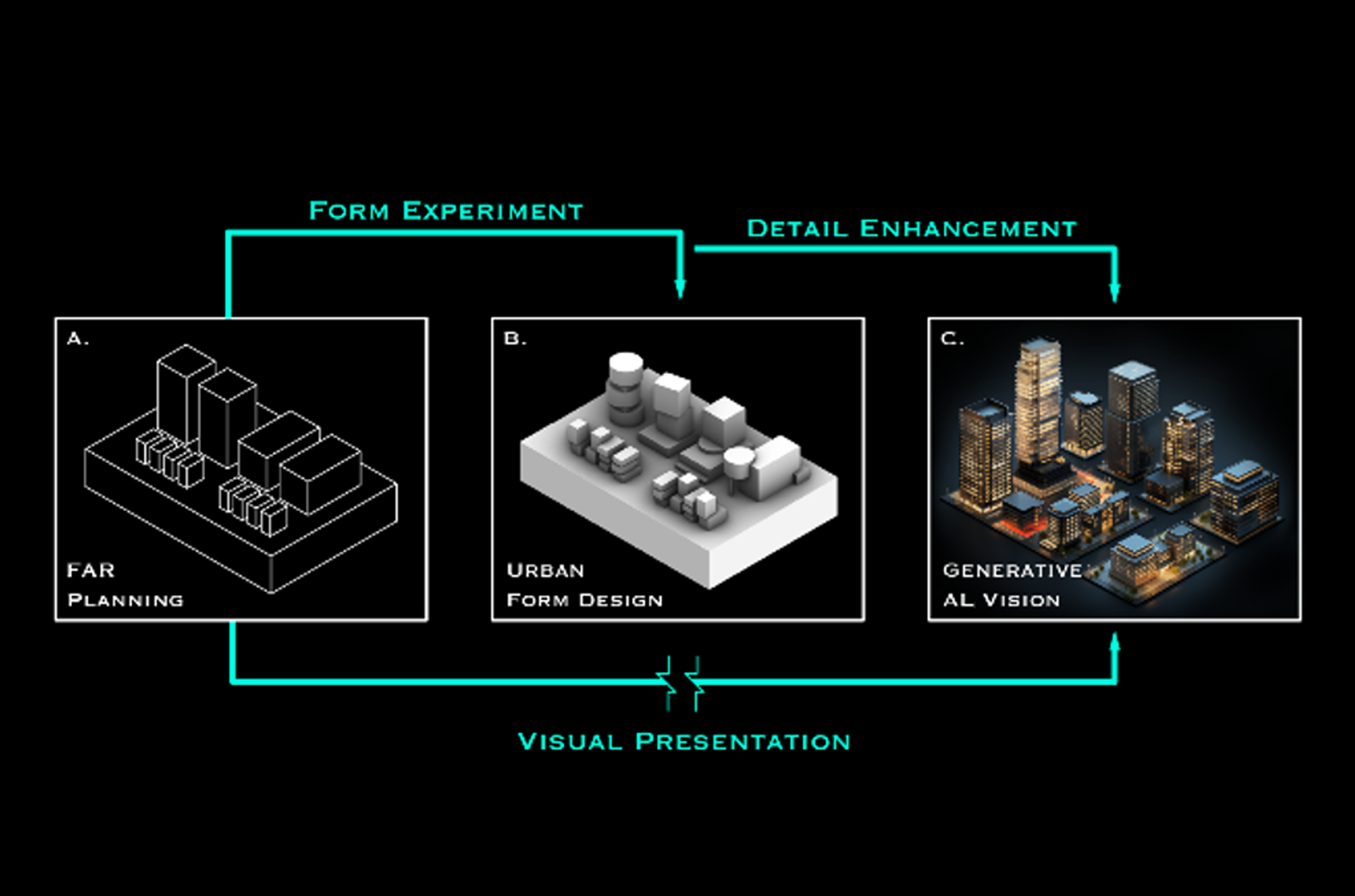

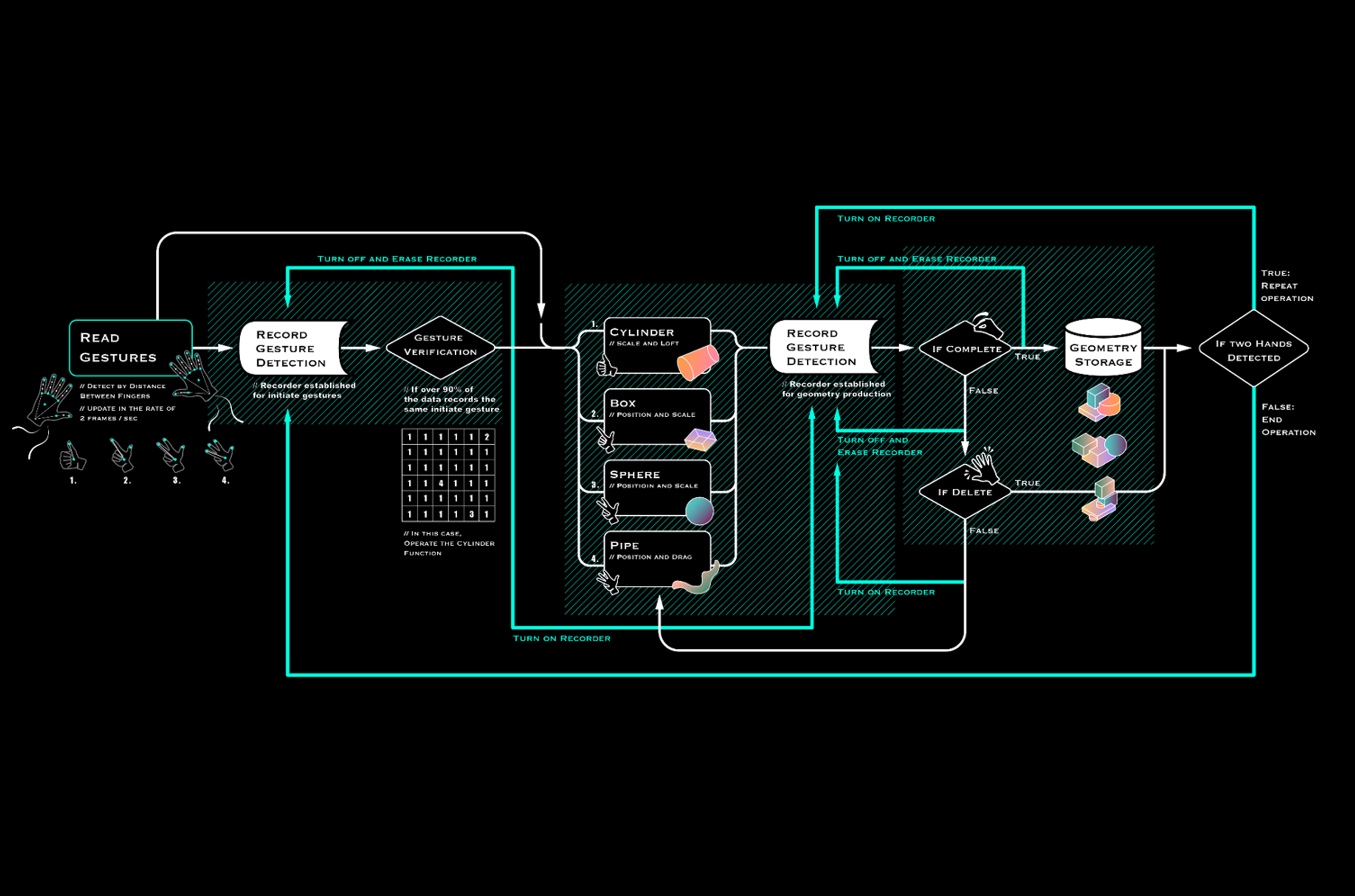

PhysiCAD is an interactive interface that enables users to create 3D models through physical gestures. By translating hand movements into digital geometry, the system reimagines 3D modeling as an embodied and intuitive experience rather than a technical skill. It lowers the barrier to entry while maintaining the precision and logic required for computational design.

Role

Interaction & Technical Lead

Team

Ben Kazer, Joe Tu

Institution / Year

Harvard University, Graduate School of Design / 2023

Tools

Grasshopper | C#